Thor: Ragnarok Case Study

Marvel Studios’ Thor: Ragnarok is a visual masterpiece. Discover how Image Engine artists created the stunning environment for one of the film's pivotal narrative moments.

Marvel Studios’ Thor: Ragnarok is a visual masterpiece. Director Taika Waititi shifts focus back to the mythological side of the Marvel Cinematic Universe, guiding audiences through a galaxy-spanning action-adventure experience replete with all the neon-hued vibrancy of the Bifrost itself.

The visual effects are constant and beautiful, from the resplendent spires of Asgard to the detailed junk pits of Sakaar – and with plenty in between, including the windswept sequences that take place atop a craggy Norwegian cliff, delivered by the team at Image Engine.

The studio’s artists had tackled the MCU once before, creating the lush jungle landscapes of Wakanda for Captain America: Civil War. They were more than happy to dive in once again, offering environmental expertise across this pivotal narrative moment.

“It was truly great to be asked back to the MCU,” begins Dave Morley, VFX supervisor on the project. “The Marvel movies are some of the most impressive visual experiences in cinema today, not to mention the driver of many of today’s most innovative visual effects. It was a true honor to be asked back to that continuing narrative.”

Bringing Thor down to earth

Image Engine’s core sequence takes place as Thor and his duplicitous adoptive brother Loki return to Midgard to search for their lost father, Odin. A number of teleportations see them uprooted from Doctor Strange’s mansion and deposited on a Norwegian cliff face, facing out towards a placid sea.

“It was a great sequence to work on – albeit one that we came onto very late in the process,” says Morley. “Our contribution to Thor: Ragnarok was very much 911 work: from award to delivery we had eight weeks to complete around 150 shots.

“Thankfully, we have a lot of past experience in quick turnaround work: our battle-tested pipeline is perfectly prepared for any challenge. We knew going into Thor that even within the time we had we could create a beautiful digital environment – and one that would visually reflect the emotional beats taking place within it.”

A storm’s a-brewin’

The sequence was initially shot on-location, with the surrounding landscape obscured by blue screens. Image Engine’s artists ultimately turned the scene into a fully digital environment, creating the meadow, cliff, and skies, the latter of which transitions from clear, sunlit blue to a foreboding blanket of stormy cloud.

“That transition required a great deal of thought, as it needed to gradually build up over time,” says Murray Stevenson, CG supervisor. “To start the process we took a collection of sky plates supplied via Marvel Studios, which they’d captured for various MCU films over the years, and started piecing them together.”

To increase efficiency and deliver more creative iterations, Image Engine’s artists worked to automate processes related to the look and feel of the sky: “We used a lot of the rendering technologies we have available for templatizing 3D renders for animation,” explains Stevenson.

“For instance, if we needed to change the sky, we could re-render the sky and horizon projections for all the shots and regenerate them with fairly little artist intervention. Artists could just update one thing and batch run it, and push it into shots to see the results.

“Working in this automated way meant that our compositing team didn’t have to constantly re-run sky shots. They could do that in the background while they continued pulling keys and ensuring everything was super balanced for the final look. That was massively beneficial in hitting the short deadlines on this project.”
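The template-driven workflow Stevenson describes can be illustrated with a minimal sketch in plain Python. It is not Image Engine's pipeline: the `SkyTemplate` and `Shot` classes, the shot names, and the plate names are all hypothetical, and the "render job" is only a dictionary of settings. It shows the general idea of editing one shared template and regenerating every shot's job from it.

```python
from dataclasses import dataclass, field


@dataclass
class SkyTemplate:
    """Shared settings that every shot's sky render is generated from (hypothetical)."""
    sky_plate: str
    storm_amount: float  # 0.0 = clear sky, 1.0 = full storm


@dataclass
class Shot:
    name: str
    # Per-shot overrides layered on top of the shared template.
    overrides: dict = field(default_factory=dict)


def build_render_jobs(template, shots):
    """Expand one template into a render job description per shot."""
    jobs = []
    for shot in shots:
        settings = {
            "sky_plate": template.sky_plate,
            "storm_amount": template.storm_amount,
        }
        settings.update(shot.overrides)  # shot-specific tweaks win
        jobs.append({"shot": shot.name, **settings})
    return jobs


# Invented shot list for illustration only.
shots = [
    Shot("cliff_010"),
    Shot("cliff_020", overrides={"storm_amount": 0.8}),
]

template = SkyTemplate(sky_plate="sky_clear_v001", storm_amount=0.0)
jobs = build_render_jobs(template, shots)

# Changing the sky means editing the template once and regenerating every job.
updated = SkyTemplate(sky_plate="sky_storm_v002", storm_amount=0.3)
updated_jobs = build_render_jobs(updated, shots)

for job in updated_jobs:
    print(job)
```

Because every job is derived from the same template, a single change propagates to all shots, while per-shot overrides survive the regeneration.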

The grass is always greener

Because the shoot took place over the course of a week, the meadow’s grass became increasingly trampled. The film’s narrative continuity, however, demanded an untouched, Asgardian-esque meadow.

“We decided early on to replace the in-camera meadow with a digital version, from the immediate camera right out to the horizon,” says Stevenson.

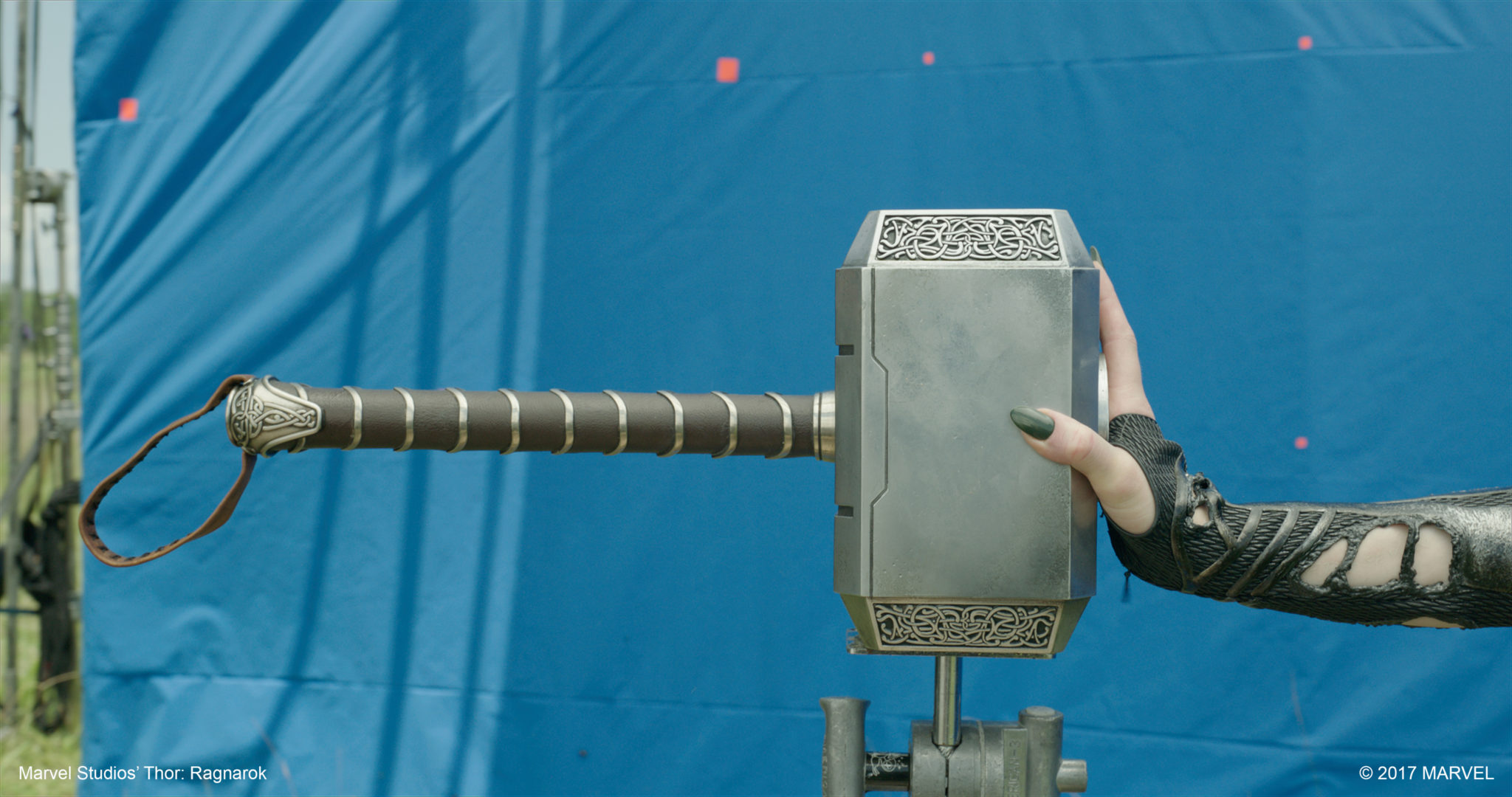

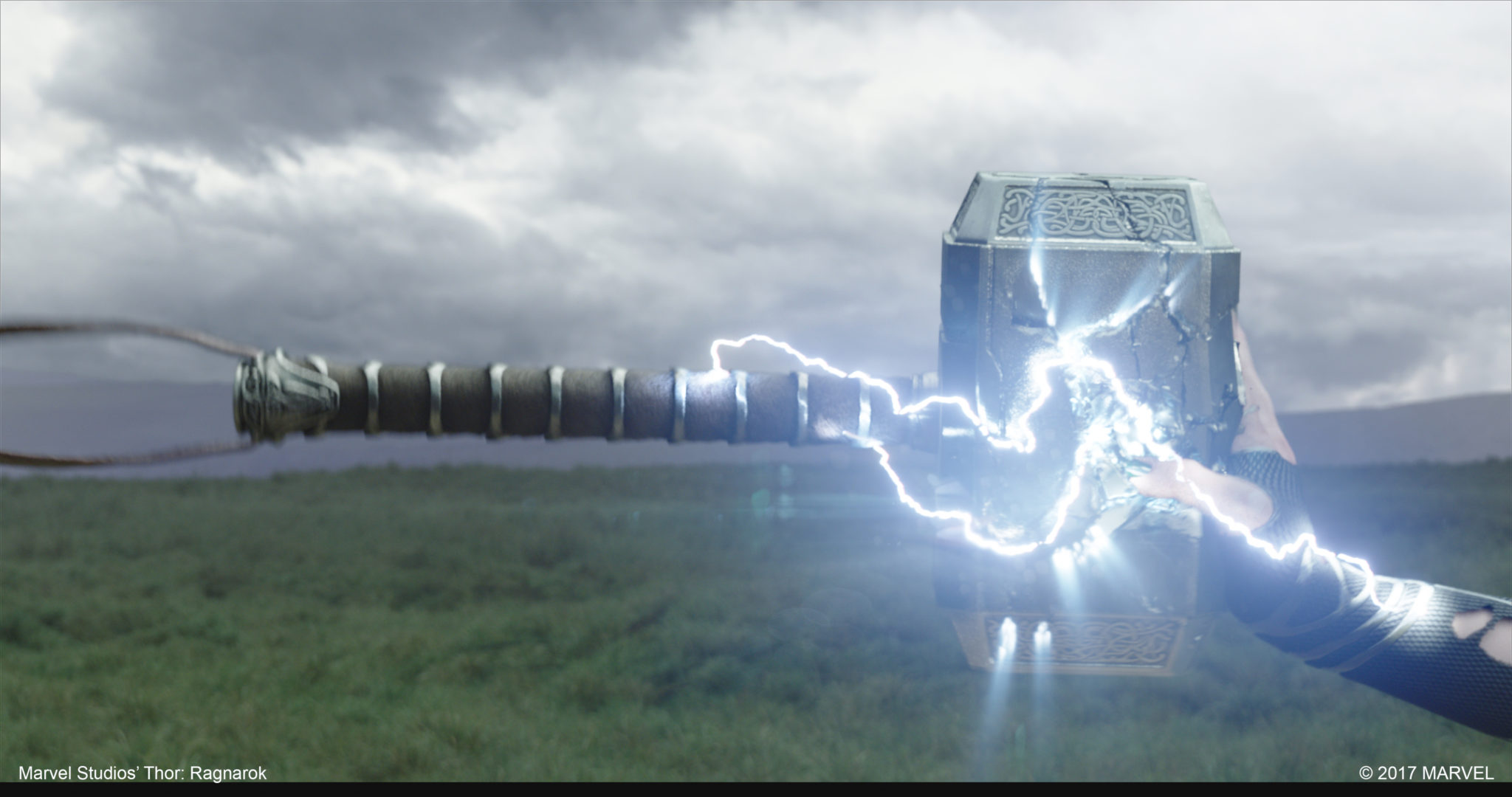

To populate this area, artists created five types of grass, each with a further two or three variants. Each variant was simulated to varying degrees as the emotional intensity of the sequence increased: the individual grass blades needed to move ambiently in the wind during dialogue scenes, but then respond more violently to effects such as the shockwave caused by the destruction of Thor’s hammer, Mjolnir.

“If we were to treat the meadow as one giant piece of geometry, it would be entirely inefficient,” says Stevenson. “Instead we dealt with the meadow as an instancing system: we had 600,000 individual strands of grass that were all driven from an initial template library of each of the grass variations. They each had a shockwave simulation, ambient wind simulation, stronger wind simulation, and so on.

“We were able to pick and choose which of those simulations were used for any point on the fields. Then at render time we could dynamically adjust those simulations, so if the grass was moving too much in a shot, rather than re-sim it with less wind, we could dynamically dial it down at render time with half the strength. All of this was doable in Gaffer at render time, which saved us a huge amount of iteration cost and time.”
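A rough sketch of this instancing idea, in plain Python rather than Gaffer, might look like the following. Everything in it is an assumption made for illustration: the `LIBRARY` contents, the sine-wave stand-in for a cached simulation, the `GrassInstance` fields, and the `strength` parameter. The point is that each instance only references a shared simulation, and a multiplier applied at evaluation time scales the motion without re-simulating.

```python
import math
import random
from dataclasses import dataclass


def make_sim(amplitude, frequency):
    """Stand-in for a cached simulation: blade bend (degrees) per frame."""
    def bend(frame):
        return amplitude * math.sin(frequency * frame)
    return bend


# Hypothetical template library, simulated once and shared by all instances.
# Keys are (grass variant, simulation name); the values here are invented.
LIBRARY = {
    ("short", "ambient_wind"): make_sim(2.0, 0.10),
    ("short", "strong_wind"): make_sim(8.0, 0.25),
    ("short", "shockwave"): make_sim(30.0, 0.80),
    ("tall", "ambient_wind"): make_sim(4.0, 0.10),
    ("tall", "strong_wind"): make_sim(12.0, 0.25),
    ("tall", "shockwave"): make_sim(45.0, 0.80),
}


@dataclass
class GrassInstance:
    """A lightweight reference into the library, not a copy of geometry."""
    x: float
    z: float
    variant: str
    sim: str
    frame_offset: int  # stops neighbouring blades moving in lockstep


def scatter(count, sim, seed=0):
    """Scatter instances over a unit-square meadow with a seeded RNG."""
    rng = random.Random(seed)
    return [
        GrassInstance(
            x=rng.random(),
            z=rng.random(),
            variant=rng.choice(["short", "tall"]),
            sim=sim,
            frame_offset=rng.randrange(24),
        )
        for _ in range(count)
    ]


def bend_at(instance, frame, strength=1.0):
    """Evaluate the shared simulation, scaled by a render-time multiplier."""
    sim = LIBRARY[(instance.variant, instance.sim)]
    return strength * sim(frame + instance.frame_offset)


# 1,000 instances keeps the sketch quick; the article cites 600,000 strands.
meadow = scatter(1000, sim="strong_wind")
blade = meadow[0]

full = bend_at(blade, frame=12)
# Too much movement in the shot: halve it without re-simulating anything.
halved = bend_at(blade, frame=12, strength=0.5)
print(f"full: {full:.3f}  halved: {halved:.3f}")
```

Swapping a region of the meadow to a different simulation is then just a matter of changing the `sim` name on its instances, since the cached motion itself lives in the shared library.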

Efficient technology, enhanced creativity

Automated processes such as these are vital at Image Engine, which constantly seeks new ways to improve workflow efficiency. After all, simplifying complex processes means empowering artists to get on with what they do best: creating.

“The more you can robotize certain tasks the better – you want people to focus on making things look good, rather than the technical nitty-gritty,” says Morley. “What’s more, alongside productivity, you also get a continuity win: when you’re automating, everything is generated from the same template, so you get a more consistent result.

“Time spent on such R&D is key, as it makes 911 work entirely feasible – we can deliver on budget and to deadline without sacrificing anything in the way of quality.”

Making an entrance

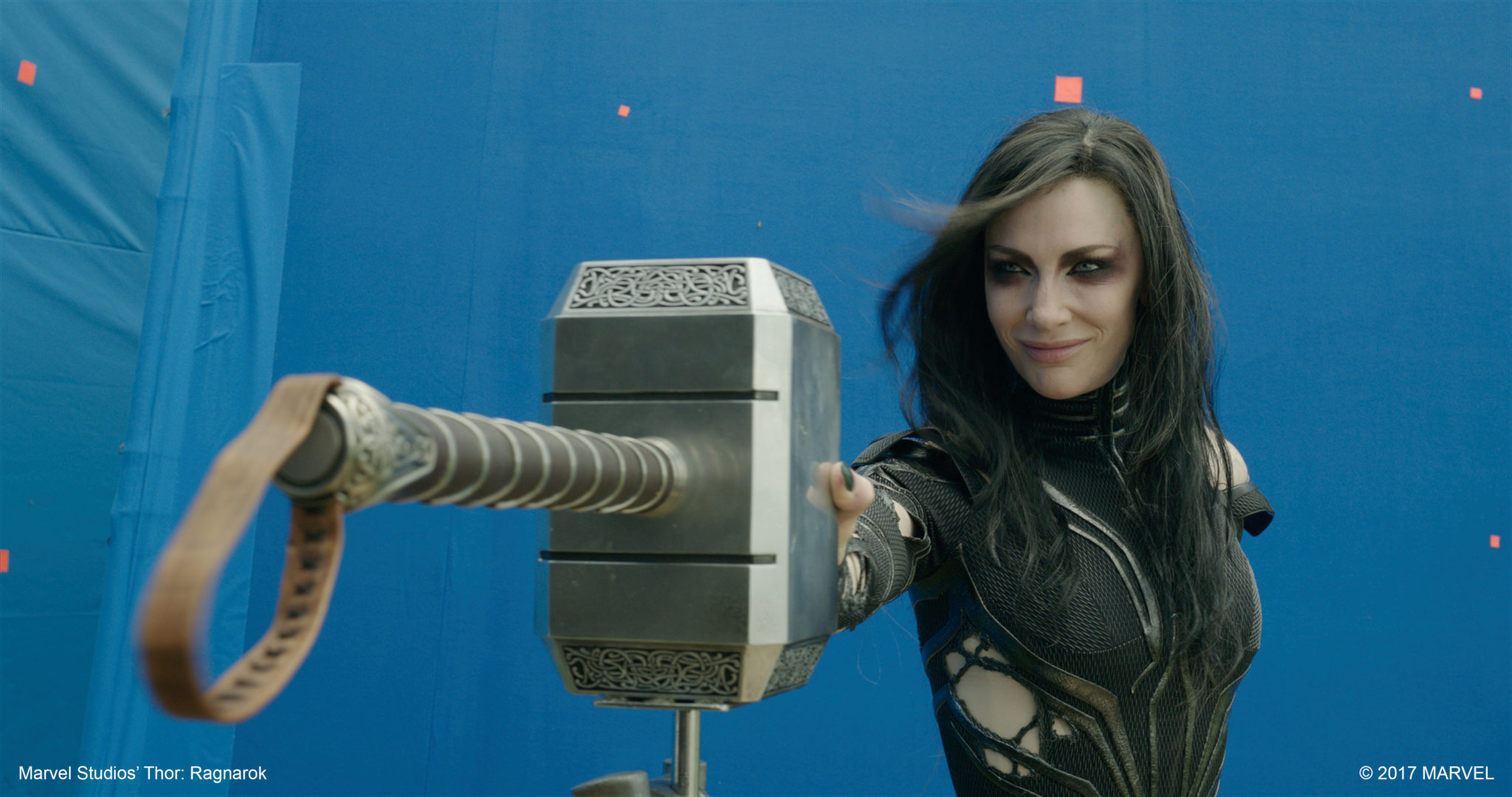

Image Engine worked on a number of other shots throughout the cliffside sequence, including the explosion of Mjolnir and the simulated portal through which Hela enters the scene, which artists based on work supplied by Rising Sun Pictures for an earlier incarnation of the effect.

“We took the design further in terms of its overall aesthetic, with a big focus on showing the actual opening of the portal, which hadn’t been created previously,” says Morley.

“The first shot shows a close-up of Hela’s feet moving through the portal, so we needed to add interaction between her and the simulation. This was achieved with SideFX Houdini, using animated particles converted into VDBs which give the portal its oily, thick metaball look.”
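The "metaball" quality of particles turned into a volume can be illustrated with a small, self-contained sketch. It uses neither Houdini nor OpenVDB: it sums a smooth falloff from each particle into a dense 2D grid, where a production setup would use a sparse 3D volume. The function names, kernel, radius, and threshold are assumptions chosen for the example. It shows why nearby particles fuse into a single thick blob instead of reading as separate spheres.

```python
def metaball_field(particles, radius, width, height):
    """Sum a smooth falloff from every particle into a dense 2D grid."""
    grid = [[0.0] * width for _ in range(height)]
    r2 = radius * radius
    for px, py in particles:
        for y in range(height):
            for x in range(width):
                d2 = (x - px) ** 2 + (y - py) ** 2
                if d2 < r2:
                    # Smooth kernel: 1 at the particle centre, 0 at the radius.
                    grid[y][x] += (1.0 - d2 / r2) ** 2
    return grid


def inside(grid, threshold=0.5):
    """Mark cells whose accumulated density reaches the surface threshold."""
    return [[value >= threshold for value in row] for row in grid]


# Two particles four cells apart, each with a radius of three cells.
pair = metaball_field([(3, 4), (7, 4)], radius=3, width=11, height=9)
single = metaball_field([(3, 4)], radius=3, width=11, height=9)

# The cell halfway between the particles is outside either blob on its own,
# but the summed field pushes it over the threshold, so the blobs merge.
print("single:", round(single[4][5], 3), "pair:", round(pair[4][5], 3))

for row in inside(pair):
    print("".join("#" if cell else "." for cell in row))
```

Animating the particle positions and re-evaluating the field each frame would make the merged surface flow, which is the general behaviour behind a thick, oily look.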

Image Engine also gave Hela’s big entrance extra grandeur in the form of her barbed, antler-like headdress, which she adopts when entering battle.

As she approaches Thor and Loki, Hela, played by Cate Blanchett, runs her hands back through her hair. As she does so her dark locks transform, the thorned crown animating out from the back of her head.

“This transition required a huge amount of work, beyond the animation of the headdress itself,” says Morley. “For starters, we needed to create a full-CG body to smooth out Hela’s suit, making it look as clean and slick as possible. The compositing team also had to completely remove Cate’s hair, as it was spread all over her front and neck in the plates. That entailed a lot of paint cleanup, which ultimately aided the smooth transition of the cowl rising and extending from Hela’s head.”

The Marvel Cinematic Universe language

Although Image Engine owned the body of work witnessed across this pivotal sequence, Stevenson states the team was careful to stay true to the visual “language” established for the MCU in previous films: “Everything, from the look of the lightning to Doctor Strange’s Sling Ring effects, has an established look and grammar in the Marvel Cinematic Universe – it was important that we worked to match that.”

Indeed, working as part of the “MCU family” in this way is what made Thor: Ragnarok such a joy for the Image Engine team: “Marvel Studios’ films can pull in 14 vendors, because they know everyone understands and shares the same vision,” says Morley. “We received assets from vendors like Rising Sun Pictures and Method, and worked in tandem to create something engaging and consistent.

“It helps that the Marvel Studios team is always there, always available,” he concludes. “Every call would be with the entire executive team – Brad Winderbaum, Victoria Alonso, visual effects supervisor Jake Morrison, and of course Taika Waititi. It all makes for a great decision-making process. They’re so open to creative input, talking things through, and finding the right way to deliver on that Marvel ‘language’.”

“That’s why the final results are so great,” says visual effects executive producer Shawn Walsh. “It’s the collective creative bravery of the Marvel Studios’ brass – they challenged us to constantly push and improve the sequence every day, and we couldn’t be prouder of what we achieved in such a short space of time!”